WARNING: This post deals with the technical implications of programming for Google Glass. If you are not familiar with APIs, cloud technology, etc., you may want to leave. You've been warned :)

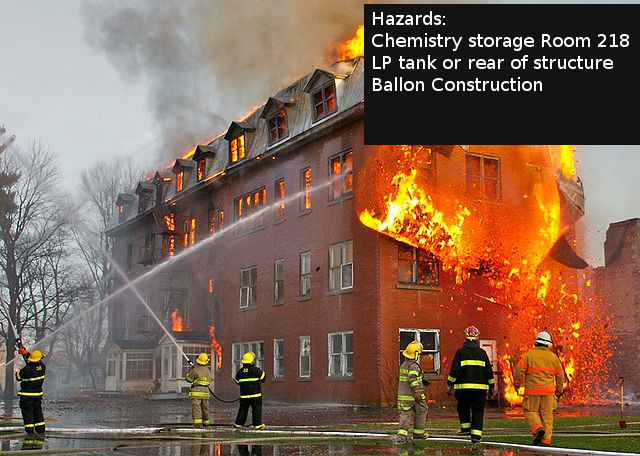

Google Glass could be a useful tool for firefighters. It can put text and pictures directly in the user's field of vision, which makes it a quick way to deliver small amounts of information such as dispatch details, location information, or hazard alerts. I don't think Google Glass would be useful for interior firefighting (at least not in its current form). However, for an incident commander, safety officer, or other exterior role it could prove useful.

I have yet to receive a pair of Google Glass, but hopefully I will soon with help from some contributions (hint, hint: you can help by following this link). I do, however, understand how to program for it and can't wait to do so once I get a pair.

Currently there is only one 'official' way to make apps for Google Glass: the Mirror API. Essentially, Google hosts each Google Glass user's 'timeline' on its servers. Cards can be inserted into the timeline using the Mirror API. These cards then appear on the user's Glass and can be viewed, dismissed, etc. The Mirror API is a RESTful API, a style that modern web developers are very familiar with.
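To make the idea of inserting a card more concrete, here is a minimal sketch in Python of what such a REST call might look like. It only builds the HTTP request and does not send it. The endpoint URL and the `text`, `notification`, and `menuItems` fields follow my understanding of the Mirror API timeline resource; the function name `build_timeline_insert` and the token value are made up for illustration, and a real app would first obtain an OAuth 2.0 access token for the user.

```python
import json
import urllib.request

# Timeline collection endpoint, as I understand it from the Mirror API docs.
MIRROR_TIMELINE_URL = "https://www.googleapis.com/mirror/v1/timeline"


def build_timeline_insert(text, access_token):
    """Build (but do not send) a POST request that inserts a text card.

    `access_token` is assumed to be an OAuth 2.0 token for the Glass user.
    """
    card = {
        "text": text,
        # Ask Glass to alert the wearer when the card arrives.
        "notification": {"level": "DEFAULT"},
        # Let the wearer dismiss the card from its menu.
        "menuItems": [{"action": "DELETE"}],
    }
    return urllib.request.Request(
        MIRROR_TIMELINE_URL,
        data=json.dumps(card).encode("utf-8"),
        headers={
            "Authorization": "Bearer " + access_token,
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical token; nothing is sent over the network here.
request = build_timeline_insert("Structure fire: 123 Main St", "HYPOTHETICAL_TOKEN")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would actually send it, which only makes sense with a valid token.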

My plan for using Glass in the fire service is to use Google's App Engine to host a fire department's data on a cloud server. The data will be stored in the datastore. When an incident is sent from the dispatch center, the incident location and nature will be sent to Google Glass. Location information for the address will also be sent to Google Glass and be immediately available to view.
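As a rough sketch of the dispatch step, the snippet below turns an incident record into the JSON body of a timeline card. The `Incident` class and its fields are hypothetical stand-ins for whatever the datastore model ends up looking like, and the sample incident is invented. The `location` object and the `NAVIGATE` menu action are based on my reading of the Mirror API timeline item format.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """Hypothetical incident record; the real datastore model may differ."""
    nature: str
    address: str
    latitude: float
    longitude: float


def incident_to_card(incident):
    """Map an incident to a timeline card body (a plain dict, ready for JSON)."""
    return {
        "text": f"{incident.nature}\n{incident.address}",
        "location": {
            "latitude": incident.latitude,
            "longitude": incident.longitude,
            "address": incident.address,
        },
        # NAVIGATE offers directions to the card's location; DELETE dismisses it.
        "menuItems": [{"action": "NAVIGATE"}, {"action": "DELETE"}],
        "notification": {"level": "DEFAULT"},
    }


# Invented example incident.
card = incident_to_card(Incident("Structure fire", "123 Main St", 40.0, -75.0))
print(card["text"])
```

Keeping the mapping in one small function means the dispatch handler only has to load the incident and post the resulting dict to the timeline.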

Location information could also be accessed by selecting a 'show close by information' option. In this case I will use App Engine's Search API to find the location information closest to the user and then send that data to the Mirror API.
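The 'closest information' lookup boils down to sorting stored locations by distance from the user. The sketch below does this in plain Python with the haversine formula rather than the Search API itself, so it shows the idea, not the actual App Engine call. The record layout (`name`, `lat`, `lon`) and the sample data are made up for illustration.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def closest(records, lat, lon, limit=3):
    """Return up to `limit` records, nearest to (lat, lon) first."""
    return sorted(
        records,
        key=lambda r: haversine_m(lat, lon, r["lat"], r["lon"]),
    )[:limit]


# Hypothetical pre-plan records for a department.
records = [
    {"name": "Hydrant, Main and 1st", "lat": 40.001, "lon": -75.000},
    {"name": "Chemical plant", "lat": 40.100, "lon": -75.000},
    {"name": "School", "lat": 40.010, "lon": -75.000},
]
nearest = closest(records, 40.0, -75.0, limit=2)
print([r["name"] for r in nearest])
```

Sorting every record is fine for a small department's data set; a hosted search index becomes worthwhile once the list is too large to scan on each request.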

Location information will be entered through an existing Android application called FirefighterLog. This app will be receiving an update soon that will allow entering location info. Once information is entered into the app, it will be accessible by everyone in the department via smartphone or Google Glass. I will also be looking into importing existing data from fire software, such as the FireHouse software.